VAMboozled!: A North Carolina Teacher’s Guest Post on His/Her EVAAS Scores

A teacher from North Carolina recently emailed me for advice on how to read and understand his/her recently received Education Value-Added Assessment System (EVAAS) value-added scores. You likely recall that the EVAAS is the model I cover most on this blog, in part because it is the system I have researched the most, and in part because it is the proprietary system adopted by multiple states (e.g., Ohio, North Carolina, and South Carolina) and districts across the country, for which taxpayers continue to pay big money. Of late, this is also the only value-added model (VAM) at issue in the recent lawsuit that teachers won in Houston (see here).

You might also recall that the EVAAS is the system developed by the late William Sanders (see here), who ultimately sold it to SAS Institute Inc., which now holds all rights to the VAM (see also prior posts about the EVAAS here, here, here, here, here, and here). It is also important to note that this teacher teaches in North Carolina, where SAS Institute Inc. is located and where its CEO, James Goodnight, is considered the richest man in the state. As a major Grand Old Party (GOP) donor, “he” helps to set all of the state’s education policy, as the state is also dominated by Republicans. All of this also means that it is unlikely EVAAS will go anywhere unless there is honest and open dialogue about the shortcomings of the data.

Hence, the attempt here is to begin at least some honest and open dialogue. Accordingly, here is what this teacher wrote in response to my request that (s)he write a guest post:

***

SAS Institute Inc. claims that the EVAAS enables teachers to “modify curriculum, student support and instructional strategies to address the needs of all students.” My goal this year is to see whether these claims hold up in practice. I’d like to dig deep into the data made available to me (for which my state pays over $3.6 million per year) to see what these data say about my instruction.

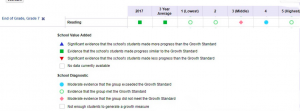

For starters, here is what my EVAAS-based growth looks like over the past three years:

As you can see, three years ago I met my expected growth, but my growth measure was slightly below zero. The year after that I knocked it out of the park. This past year I was right in the middle of my prior two years of results. Notice the volatility [i.e., an issue with VAM-based reliability, or consistency, or a lack thereof; see, for example, here].

Notwithstanding, SAS Institute Inc. makes the following recommendations in terms of how I should approach my data:

Reflecting on Your Teaching Practice: Learn to use your Teacher reports to reflect on the effectiveness of your instructional delivery.

The Teacher Value Added report displays value-added data across multiple years for the same subject and grade or course. As you review the report, you’ll want to ask these questions:

- Looking at the Growth Index for the most recent year, were you effective at helping students to meet or exceed the Growth Standard?

- If you have multiple years of data, are the Growth Index values consistent across years? Is there a positive or negative trend?

- If there is a trend, what factors might have contributed to that trend?

- Based on this information, what strategies and instructional practices will you replicate in the current school year? What strategies and instructional practices will you change or refine to increase your success in helping students make academic growth?

Yet my growth index values are not consistent across years, as noted above. Rather, my “trends” are baffling to me. When I compare those three instructional years in my mind, nothing stands out in terms of differences in instructional strategies that would explain the fluctuations in my growth measures.

So let’s take a closer look at my data for last year (i.e., 2016-2017). I teach 7th grade English/language arts (ELA), so my numbers are based on my students’ grade 7 reading scores in the table below.

What jumps out for me here is the contradiction in “my” data for achievement Levels 3 and 4 (achievement levels start at Level 1 and top out at Level 5; Levels 3 and 4 are considered proficient/middle of the road). There is moderate evidence that my grade 7 students who scored a Level 4 on the state reading test exceeded the Growth Standard. But there is also moderate evidence that my grade 7 students who scored a Level 3 did not meet the Growth Standard. At the same time, the percentage of my students demonstrating proficiency on the same reading test (by scoring at least a Level 3) increased from 71% in 2015-2016 (when I exceeded expected growth) to 76% in 2016-2017 (when my growth declined significantly). This makes no sense, right?

Hence, after considering my data above, the question I’m left with is an important one: Are the instructional strategies I’m using for my students whose achievement levels are in the middle working, or are they not?

I’d love to hear from other teachers on their interpretations of these data. A tool that costs taxpayers this much money and impacts teacher evaluations in so many states should live up to its claims of being useful for informing our teaching.

This blog post has been shared by permission from the author.

The views expressed by the blogger are not necessarily those of NEPC.