Jersey Jazzman: Things Education "Reformers" Still Don't Understand About Testing

There was a new report out last week from the education "reform" group JerseyCAN, the local affiliate of 50CAN. In an op-ed at NJ Spotlight, Executive Director Patricia Morgan makes an ambitious claim:

New Jersey students have shown significant improvements in English Language Arts (ELA) and math across the grade levels since we adopted higher expectations for student learning and implemented a more challenging exam. And these figures are more than just percentages. The numbers represent tens of thousands more students reading and doing math on grade level in just four years.

None of this has happened by accident. For several decades, our education and business community leaders have come together with teachers and administrators, parents and students, and other stakeholders to collaborate on a shared vision for the future. Together, we’ve agreed that our students and educators are among the best in the nation and are capable of achieving to the highest expectations. We’ve made some positive changes to the standards and tests in recent years in response to feedback from educators, students, and families, but we’ve kept the bar high and our commitment strong to measuring student progress toward meeting that bar.

A New Jersey high school diploma is indeed becoming more meaningful, as evidenced by the academic gains we’ve see year over year and the increase in students meeting proficiency in subjects like ELA 10 and Algebra I. Our state is leading the nation in closing ELA achievement gaps for African American and Hispanic students since 2015. [emphasis mine]

This is a causal claim: according to Morgan, academic achievement in New Jersey is rising because the state implemented a tougher test based on tougher standards. If there's any doubt that this is JerseyCAN's contention, look at the report itself:

The name of the new exam, the Partnership for Assessment of Readiness for College and Careers, or PARCC, has become a political lightning rod that has co-opted the conversation around the need for an objective measure of what we expect from our public school graduates.

This report is not about the politics of PARCC, but rather the objective evidence that our state commitment to high expectations is bearing fruit. As we imagine the next generation of New Jersey’s assessment system, we must build on this momentum by focusing on improvements that will help all students and educators without jeopardizing the gains made across the state.

That's their emphasis, not mine, and the message is clear: implementing the PARCC is "bearing fruit" in the form of better student outcomes. Further down, the report points to a specific example of testing itself driving better student outcomes:

Educators and district and state leaders also need many forms of data to identify students who need additional support to achieve to their full potential, and to direct resources accordingly. New Jersey’s Lighthouse Districts offer one glimpse into the way educators have used assessment and other data to inform instruction and improve student outcomes. These seven diverse school districts were named by the New Jersey Department of Education (NJDOE) in 2017 for their dramatic improvements in student math and ELA performance over time.29

The K-8 Beverly City district, for example, has used assessment data over the past several years as part of a comprehensive approach to improve student achievement. Since 2015, Beverly City has increased the students meeting or exceeding expectations by 20 percentage points in ELA and by 15 in math.30

These districts and schools have demonstrated how test results are not an endpoint, but rather a starting point for identifying areas of strength and opportunities for growth in individual students as well as schools and districts. This emphasis on using data to improve instruction is being replicated in schools across the state — schools that have now invested five years in adjusting to higher expectations and working hard to prepare students to meet them. [emphasis mine]

Go through the entire report and you'll note there is no other claim made as to why proficiency rates have risen over the past four years: the explicit argument here is that implementing PARCC has led to significant improvements in student outcomes.

Before I continue, stop for a minute and ask yourself this: does this argument, on its face, make sense?

Think about it: what JerseyCAN is claiming is that math and English Language Arts (ELA) instruction wasn't as good as it could have been a mere 4 years ago. And all that was needed was a tougher test to improve students' ability to read and do math -- that's it.

The state didn't need to deploy more resources, or improve the lives of children outside of school, or change the composition of the teaching workforce, or anything that would require large-scale changes in policy. All that needed to happen was for New Jersey to put in a harder test.

This is JerseyCAN's theory; unfortunately, it's a theory that shows very little understanding of what tests are and what they can do. There are at least three things JerseyCAN failed to consider before making their claim:

1) Test outcomes will vary when you change who takes the test.

JerseyCAN's report acknowledges that the rate of students who opt out of taking the test has declined:

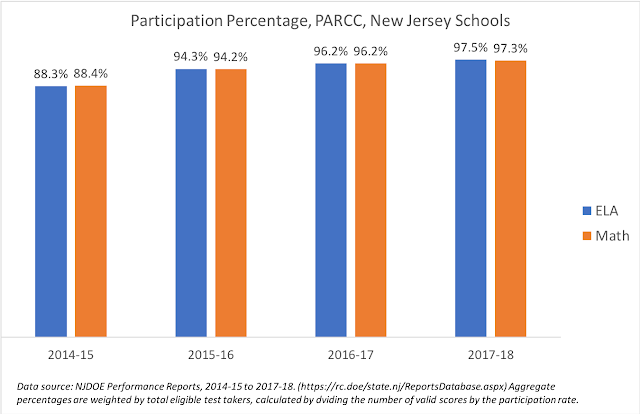

When the PARCC exam first rolled out in 2014-15, it was met with opposition from some groups of educators and parents. This led to an opt-out movement where parents refused to let their children sit for the test. Over the past four years, this trend has sharply declined. The chart below shows a dramatic increase in participation rates in a sample of secondary schools across four diverse counties. As schools, students, and families have become more comfortable with the assessment, participation has grown significantly.31

We'll come back to that last sentence in a bit; for now, let's acknowledge that JerseyCAN is right: participation rates for the PARCC have been climbing. We don't have good breakdowns on the data, so it's hard to know if the participation rates are growing consistently across all grades and types of students. In the aggregate, however, there's no question a higher percentage of students are taking the tests.

The graph above shows participation rates in the past 4 years have climbed by 10 percentage points. Nearly all eligible students are now taking the tests.

So here's the problem: if the opt-out rates are lower, that means the overall group of students taking the test each year is different from the previous group. And if there is any correlation between opting out and student achievement, it will bias the average outcomes of the test.

Put another way: if higher achieving kids were opting out in 2015 but are now taking the test, the test scores are going to rise. But that isn't because of superior instruction; it's simply that the kids who weren't taking the test now are.

Is this the case? We don't know... and if we don't know, we should be very careful about making claims about why test outcomes are improving.
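To see how a change in who takes the test can move the proficiency rate all by itself, here is a minimal sketch in Python. Every number in it is invented for illustration; none of it comes from New Jersey data, and the assumption that opt-outs were higher achieving is exactly the thing we don't know.

```python
def proficiency_rate(groups):
    """Return the share of test takers who are proficient.

    `groups` is a list of (number_of_test_takers, share_proficient) pairs.
    """
    takers = sum(n for n, _ in groups)
    proficient = sum(n * share for n, share in groups)
    return proficient / takers


# Hypothetical cohort of 1,000 students (all figures are made up).
# 900 students take the test every year; 50% of them are proficient.
# 100 students opted out in year 1; 70% of them would have been proficient.
always_tested = (900, 0.50)
former_opt_outs = (100, 0.70)

year_1 = proficiency_rate([always_tested])                   # opt-outs are missing
year_4 = proficiency_rate([always_tested, former_opt_outs])  # everyone is tested

print(f"Year 1 proficiency rate: {year_1:.1%}")
print(f"Year 4 proficiency rate: {year_4:.1%}")
```

In this toy example the rate goes up by two percentage points even though not a single student learned anything new. Flip the assumption (make the opt-outs lower achieving) and the rate would fall instead, which is why the composition of the tested group has to be accounted for before anyone credits instruction.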

2) Test outcomes will vary due to factors other than instruction.

We've been over this a thousand times on this blog, so I won't have to post yet another slew of scatterplots when I state: there is an iron-clad correlation between student socio-economic status and test scores. Which means that changes in economic conditions will likely have an effect on aggregate test outcomes.

When PARCC was implemented, New Jersey was just starting to come out of the Great Recession. Over the next four years, the poverty rate declined significantly; see the graph above.* Was this the cause of the rise in proficiency rates? Again, we don't know... which is why, again, JerseyCAN shouldn't be making claims about why proficiency rates rose without taking things like poverty into account.

These last two points are important; however, this next one is almost certainly the major cause of the rise in New Jersey PARCC proficiency rates:

3) Test outcomes rise as test takers and teachers become more familiar with the form of the test.

All tests are subject to construct-irrelevant variance, which is a fancy way of saying that test outcomes vary because of things other than the student abilities we are attempting to measure.

Think about a test of mathematics ability, for example. Now imagine a non-native English speaker taking that test in English. Will it be a good measure of what we're trying to measure -- mathematical ability? Or will the student's struggles with language keep us from making a valid inference about that student's math skills?

We know that teachers are feeling the pressure to have students perform well on accountability tests. We have evidence that teachers will target instruction on those skills that are emphasized in previous versions of a test, to the exclusion of skills that are not tested. We know that teaching to the test is not necessarily the same as teaching to a curriculum.

It is very likely, therefore, that teachers and administrators in New Jersey, over the last four years, have been studying the PARCC and figuring out ways to pump up scores. This is, basically, score inflation: The outcomes are rising not because the kids are better readers and mathematicians, but because they are better test takers.

Now, you might think this is OK -- we can debate whether it is. What we shouldn't do is make any assumption that increases in proficiency rates -- especially modest increases like the ones in New Jersey over the last four years -- are automatically indicative of better instruction and better student learning.

Almost certainly, at least part of these gains is due to schools having a better sense of what is on the tests, and adjusting instruction accordingly. Students are also more comfortable with taking the tests on computers, thus boosting scores. Again: this isn't the same as the kids gaining better math and reading skills; they're gaining better test-taking skills. And JerseyCAN themselves admit students "...have become more comfortable with the assessment." If that's the case, what did they think was going to happen to the scores?
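Here is a rough sketch of how familiarity alone can push a proficiency rate up. The cut score, the skill levels, and the size of the "familiarity bonus" are all hypothetical numbers I've made up for illustration; the only point is that a few extra points of test comfort can carry students over a fixed cut score while their underlying skills stay exactly the same.

```python
CUT_SCORE = 750  # hypothetical proficiency cut score

# Hypothetical underlying skill levels, on the same scale as the test.
# These never change: no student gets any better at reading or math.
skill = [700, 720, 735, 745, 748, 752, 760, 780, 800, 820]

# Hypothetical points gained each year purely from knowing the test format.
bonuses = [0, 2, 4, 6]


def observed_scores(skill_levels, familiarity_bonus):
    """Observed score = unchanged skill + points from test familiarity."""
    return [s + familiarity_bonus for s in skill_levels]


def proficiency_rate(scores, cut=CUT_SCORE):
    """Share of scores at or above the cut score."""
    return sum(s >= cut for s in scores) / len(scores)


rates = [proficiency_rate(observed_scores(skill, b)) for b in bonuses]

for year, (bonus, rate) in enumerate(zip(bonuses, rates), start=1):
    print(f"Year {year}: bonus {bonus} points, proficiency rate {rate:.0%}")
```

The made-up rate climbs from half the students to seven in ten over four years, and every bit of that "gain" comes from students sitting just below the cut score getting nudged over it. Proficiency rates are especially prone to this because they only count who crosses one line.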

It wasn't so long ago that New York State had to admit its increasingly better test outcomes were nothing more than an illusion. Test outcomes -- especially proficiency rates -- turned out to wildly exaggerate the progress the state's students had allegedly been making. You would think, after that episode, that people who position themselves as experts on education policy would exercise caution in interpreting proficiency rate gains immediately after introducing a new test.

I've been in the classroom for a couple of decades, and I will tell you this: improving instruction and student learning is a long, grinding process. It would be great if simply setting higher standards for kids and giving them tougher tests were some magic formula for educational success -- it isn't.

I'm all for improving assessments. The NJASK was, by all accounts, pretty crappy. I have been skeptical about the PARCC, especially when the claims made by its adherents have been wildly oversold -- particularly the claims about its usefulness in informing instruction. But I'm willing to concede it was probably better than the older test. We should make sure whatever replaces the PARCC is yet another improvement.

The claim, however, that rising proficiency rates in a new exam are proof of that exam's ability to improve instruction is just not warranted. Again, it's fine to advocate for better assessments. But I wish JerseyCAN would deploy some of its very considerable resources toward advocating for a policy that we know will help improve education in New Jersey: adequate and equitable funding for all students.

Because we can keep raising standards -- but without the resources required, the gains we see in tests are likely just a mirage.

ADDING: I probably shouldn't get into such a complex topic in a footnote to a blog post...

Yes, there is evidence accountability testing has, to a degree, improved student outcomes. But the validity evidence for showing the impact of testing on learning is... another test. It seems to me it's quite likely the test-taking skills acquired in a regime like No Child Left Behind transfer to other tests. In other words: when you learn to get a higher score on your state test, you probably learn to get a higher score on another test, like the NAEP. That doesn't mean you've become a better reader or mathematician; maybe you're just a better test taker.

Again: you can argue test-taking skills are valuable. But making a claim that testing, by itself, is improving student learning based solely on test scores is inherently problematic.

In any case, a reasonable analysis is going to factor in the likelihood of score inflation as test takers get comfortable with the test, as well as changes in the test-taking population.

* That dip in 2012 is hard to explain, other than some sort of data error. It's fairly obvious the decrease in poverty over recent years, however, is real.

This blog post has been shared by permission from the author.

The views expressed by the blogger are not necessarily those of NEPC.